From Chatbot to Autonomous Agent: What's Actually Different

Most "AI agents" are glorified chatbots. Here's the precise technical and business difference — and how to identify whether you're buying real agentic capability or expensive hype.

"We've added AI to our product."

In 2026, this statement means almost nothing without context. A button that calls GPT-4 is "AI." A fully autonomous pipeline that researches, decides, acts, and recovers from failures is also "AI."

For the 100-seat BPO modeled later in this article, the difference between these two things is roughly ₹2 Crore in annual operational value.

Here's exactly how to tell them apart.

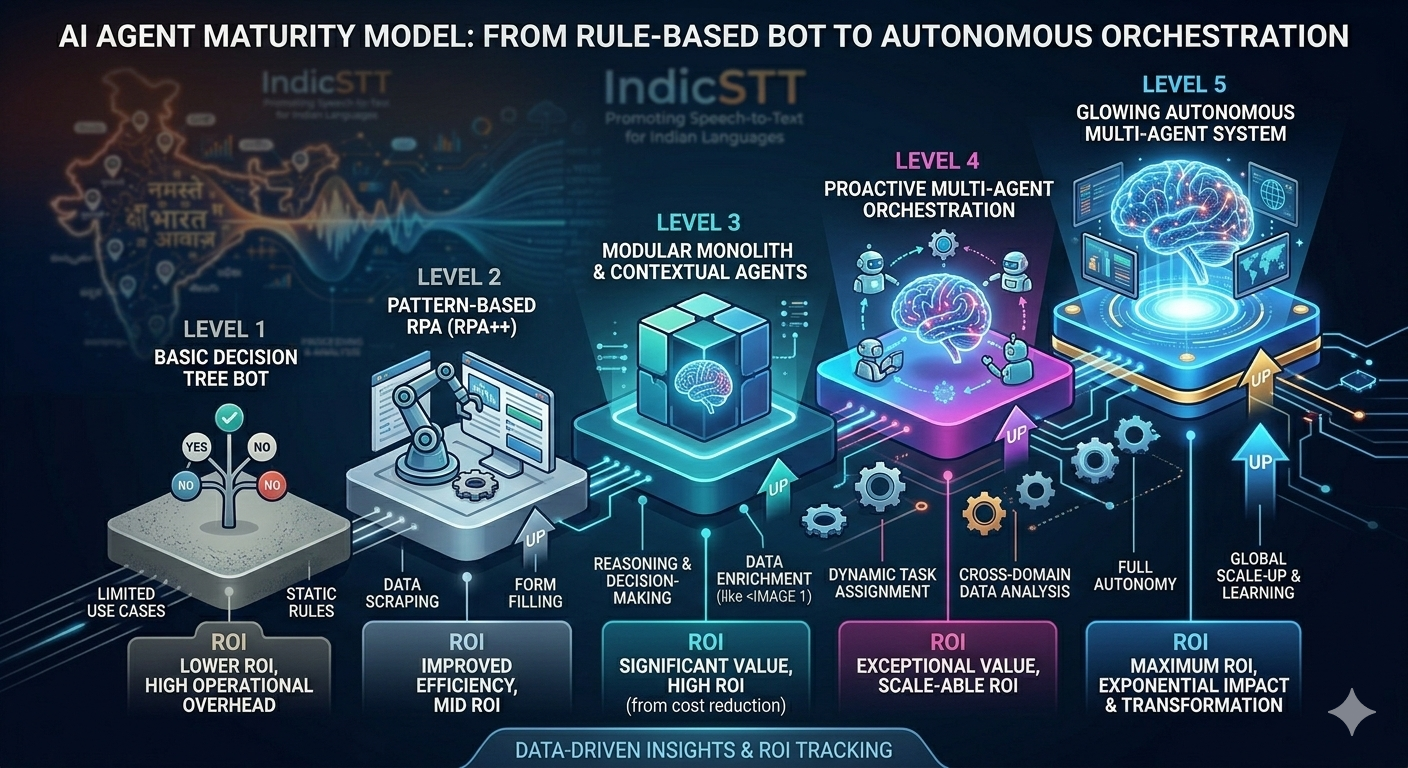

The Spectrum (5 Levels)

Level 1: Static Response Bot

└── "Press 1 for billing, press 2 for support"

├── Technology: Decision tree

├── Intelligence: Zero

└── Cost: ₹5,000/month

Level 2: LLM Chatbot

└── "How can I help you today?" (understands intent)

├── Technology: LLM + prompt

├── Intelligence: Language understanding

└── Cost: ₹15,000/month

Level 3: RAG-Augmented Assistant

└── Answers from your knowledge base accurately

├── Technology: LLM + vector DB

├── Intelligence: Knowledge retrieval

└── Cost: ₹30,000/month

Level 4: Tool-Using Agent

└── Books meetings, sends emails, updates CRM

├── Technology: LLM + function calling

├── Intelligence: Actions in the world

└── Cost: ₹1 Lakh/month (build once)

Level 5: Autonomous Multi-Agent System

└── Plans, delegates, executes, recovers, learns

├── Technology: Orchestrator + specialized agents

├── Intelligence: Goal-directed autonomy

└── Cost: ₹5–15 Lakh (build once, no recurring licence fee; infra is billed separately)

Most vendors sell Level 2. Most clients need Level 4–5.

The 5 Precise Differences

Difference 1: Memory Architecture

Chatbot:

# Stateless: forgets everything between sessions
# (`llm` is assumed to be an already-configured LLM client)
def handle_message(user_input: str) -> str:
    return llm.complete(f"User said: {user_input}")

Autonomous Agent:

# Persistent memory across sessions, handoffs, agents
# (`mcp_server` is assumed to be a context store with async get/update methods)
async def handle_message(user_input: str, agent_id: str) -> str:
    history = await mcp_server.get_context(agent_id)
    result = await llm.complete(user_input, context=history)
    await mcp_server.update_context(agent_id, result)
    return result

Business impact:

Chatbot: Customer re-explains the problem on every call (70% dissatisfaction)

Agent: "I see your last issue was billing, resolved March 1st" (92% satisfaction)

Difference 2: Tool Use vs. Response Generation

Chatbot:

User: "Book me a meeting with the sales team"

Chatbot: "Sure! Here's the link to book: calendly.com/..."

Result: User still does the work

Autonomous Agent:

User: "Book me a meeting with the sales team"

Agent:

├── Checks calendar availability (tool call)

├── Finds open slots matching user timezone (reasoning)

├── Creates calendar invite (tool call)

├── Sends confirmation email (tool call)

└── Updates CRM with meeting context (tool call)
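The four tool calls above can be sketched as a small runtime that maps tool names to functions and records every call. This is a minimal illustration, not a production design: `ToolRuntime`, `book_meeting`, and the four stub tools are hypothetical names, the stubs return canned values instead of hitting real calendar, email, or CRM APIs, and the call order is hard-coded where a real agent would let the model choose each call.

```python
from dataclasses import dataclass, field
from typing import Any, Callable


@dataclass
class ToolRuntime:
    """Maps tool names to callables and records every call in an action log."""
    tools: dict[str, Callable[..., Any]] = field(default_factory=dict)
    action_log: list[dict[str, Any]] = field(default_factory=list)

    def register(self, name: str, fn: Callable[..., Any]) -> None:
        self.tools[name] = fn

    def call(self, name: str, **args: Any) -> Any:
        result = self.tools[name](**args)
        self.action_log.append({"tool": name, "args": args, "result": result})
        return result


# Hypothetical stand-ins for real calendar, email and CRM integrations.
def check_availability(team: str) -> list[str]:
    return ["Tue 10:00", "Tue 15:00"]


def create_invite(team: str, slot: str) -> str:
    return f"invite:{team}:{slot}"


def send_confirmation(invite_id: str) -> bool:
    return True


def update_crm(invite_id: str) -> bool:
    return True


def book_meeting(runtime: ToolRuntime, team: str) -> str:
    """Runs the four tool calls from the example in order."""
    slots = runtime.call("check_availability", team=team)
    slot = slots[0]  # stand-in for the timezone-matching reasoning step
    invite_id = runtime.call("create_invite", team=team, slot=slot)
    runtime.call("send_confirmation", invite_id=invite_id)
    runtime.call("update_crm", invite_id=invite_id)
    return invite_id


runtime = ToolRuntime()
for fn in (check_availability, create_invite, send_confirmation, update_crm):
    runtime.register(fn.__name__, fn)

invite = book_meeting(runtime, "sales")
for entry in runtime.action_log:
    print(entry["tool"], entry["args"])
```

The `action_log` list is the part worth noticing: because every call goes through `ToolRuntime.call`, the trail of what the agent actually did comes for free.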

Result: Meeting booked, confirmed, logged

Difference 3: Planning Horizon

Chatbot:

Input → Response (1 step)

No planning. No sequencing. No goal decomposition.

Autonomous Agent:

Goal → Sub-tasks → Execution → Verification → Completion

Example: "Qualify these 50 leads"

├── Step 1: Research each company (Research Agent)

├── Step 2: Score by ICP match (Analysis Agent)

├── Step 3: Write personalized outreach (Copy Agent)

├── Step 4: Send + track (Outreach Agent)

└── Step 5: Log to CRM + schedule follow-up (CRM Agent)
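One way to picture the Goal → Sub-tasks → Execution → Verification flow for this example is a plan of steps, each with a check that must pass before the next step runs. The sketch below is an assumption-heavy toy: `Step`, `PLAN`, `qualify`, and the five agent functions are hypothetical, and each "agent" is a plain function returning placeholder data rather than an LLM-backed worker.

```python
from dataclasses import dataclass
from typing import Callable

Lead = dict  # a lead is a plain dict that each step enriches


@dataclass
class Step:
    name: str
    run: Callable[[Lead], Lead]     # the "agent" doing the work
    verify: Callable[[Lead], bool]  # check that must pass before moving on


# Placeholder agents; real ones would call research, scoring and email tools.
def research(lead: Lead) -> Lead:
    return {**lead, "employees": 120}


def score(lead: Lead) -> Lead:
    return {**lead, "icp_score": 8.7 if lead["employees"] >= 50 else 3.0}


def write_copy(lead: Lead) -> Lead:
    return {**lead, "email": f"Hi {lead['company']} team"}


def send(lead: Lead) -> Lead:
    return {**lead, "sent": True}


def log_crm(lead: Lead) -> Lead:
    return {**lead, "crm_logged": True}


PLAN = [
    Step("research", research, lambda lead: "employees" in lead),
    Step("score", score, lambda lead: 0 <= lead["icp_score"] <= 10),
    Step("copy", write_copy, lambda lead: bool(lead["email"])),
    Step("outreach", send, lambda lead: lead["sent"]),
    Step("crm", log_crm, lambda lead: lead["crm_logged"]),
]


def qualify(lead: Lead) -> tuple[Lead, list[str]]:
    """Runs the plan in order and stops at the first failed verification."""
    completed: list[str] = []
    for step in PLAN:
        lead = step.run(lead)
        if not step.verify(lead):
            break  # remaining steps are skipped; the lead needs review
        completed.append(step.name)
    return lead, completed


result, completed = qualify({"company": "Acme"})
print(completed)
```

Keeping the plan as data, separate from the loop that executes it, is what makes a multi-step execution trace possible: `completed` shows exactly how far each lead got.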

All 5 steps autonomous. Zero human input.

Difference 4: Error Recovery

Chatbot failure:

API timeout → Error message → User gives up

"I'm sorry, something went wrong. Please try again."

Agent failure recovery:

API timeout:

├── Retry with exponential backoff (automatic)

├── Circuit breaker after 5 failures (automatic)

├── Fallback to secondary tool (automatic)

└── Human escalation only if all else fails (intelligent)

Business impact:

Chatbot error rate: 12% (user-visible failures)

Agent error rate: 0.3% (internal recovery invisible)

Difference 5: Proactive vs. Reactive

Chatbot:

Only responds when user initiates.

Zero initiative. Zero proactive action.

Autonomous Agent:

Monitors conditions → Detects trigger → Acts without prompting

Example:

├── Lead score crosses 8.5 → Immediately notify sales

├── Support ticket open >24h → Escalate automatically

├── Payment failed → Retry + notify + update CRM

└── Monthly report due → Generate + send + archive

The "AI Washing" Problem

Red flags that you're being sold a chatbot as an "agent":

❌ "Our AI understands your customers" → LLM wrapper

❌ "AI-powered conversations" → GPT prompt chain

❌ "Intelligent automation" → RPA + LLM responses

❌ "AI agents included" → FAQ bot with NLP

❌ No mention of tool use → Definitely not an agent

Green flags for genuine agentic capability:

✅ Specific tool integrations (CRM, calendar, email)

✅ Multi-step workflow execution

✅ State persistence across sessions

✅ Error recovery + retry logic

✅ Escalation rules with human handoff

✅ Observable action logs (what did it do?)

Real-World Comparison (BPO Use Case)

Level 2 Chatbot Implementation:

Capability: Answer FAQ questions

Cost: ₹15,000/month (SaaS)

Deflection: 20% of queries

Human agents still needed: 80% of calls

Annual cost: ₹1.8 Lakh (tool) + ₹2.5 Cr (agents)

Level 5 Autonomous Agent Implementation:

Capability: Full call handling + CRM + follow-up

Cost: ₹10 Lakh (build) + ₹50K/month (infra)

Deflection: 85% of queries

Human agents still needed: 15% of calls

Annual cost: ₹16 Lakh in year 1 (₹10 Lakh build + ₹6 Lakh infra)

Savings vs chatbot: ₹2.3 Cr/year

The Build Complexity Gap

Why most vendors stop at Level 2:

Level 2 Chatbot:

├── Prompt engineering: 2 days

├── Frontend wrapper: 1 week

├── Deployment: 1 day

└── Total: 2 weeks, ₹2 Lakh

Level 5 Autonomous Agent:

├── MCP server + state management: 2 weeks

├── Tool registry + integrations: 2 weeks

├── Orchestration layer: 1 week

├── Error handling + recovery: 1 week

├── Security + audit logging: 1 week

└── Total: 7 weeks, ₹15 Lakh

The 7.5x build cost is why vendors default to chatbots and rename them agents.
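The ratios here are easy to check by hand. Below is a small sketch of the arithmetic using only figures quoted above (₹2 Lakh vs ₹15 Lakh build, ₹15,000/month for the chatbot SaaS, ₹10 Lakh build plus ₹50K/month infra and ₹2.3 Cr savings in the BPO case). The variable names are illustrative, the figures are taken at face value, and everything is kept in whole rupees to avoid floating-point noise.

```python
LAKH = 100_000
CRORE = 100 * LAKH

# Build cost gap between a Level 2 chatbot and a Level 5 agent.
chatbot_build = 2 * LAKH
agent_build = 15 * LAKH
build_ratio = agent_build / chatbot_build

# Annual tool cost of the Level 2 SaaS chatbot in the BPO example.
chatbot_tool_annual = 15_000 * 12

# Year-1 spend for the Level 5 agent in the BPO example: build plus infra.
agent_year1 = 10 * LAKH + 50_000 * 12

# Savings multiple on that spend, using the quoted ₹2.3 Cr (230 Lakh).
savings = 230 * LAKH
savings_multiple = savings / agent_year1

print(f"build ratio: {build_ratio}x")
print(f"chatbot tool cost: ₹{chatbot_tool_annual / LAKH} Lakh/year")
print(f"agent year-1 spend: ₹{agent_year1 / LAKH} Lakh")
print(f"savings multiple: {savings_multiple:.1f}x")
```

Running the numbers this way makes it obvious which inputs drive the result: the build cost is a one-off, so the multiple improves in every year after the first.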

How to Evaluate Any "AI Agent" Vendor

5 questions to ask:

1. "Show me the tool call log for a completed workflow"

(Real agents have observable action trails)

2. "What happens when your CRM API times out mid-execution?"

(Real agents have documented recovery behavior)

3. "How does the agent remember context from last week's call?"

(Real agents have persistent state architecture)

4. "What does your agent do when no user is present?"

(Real agents are proactive, not reactive)

5. "Can I see a multi-step execution trace?"

(Real agents have planning + execution separation)

If they can't answer all five questions with specifics, it's a chatbot.
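Question 2 is easier to judge when you know what a good answer looks like. Here is a rough sketch of the recovery chain from Difference 4: retry with exponential backoff, a circuit breaker that trips after 5 failures, a fallback tool, then human escalation. All names (`CircuitBreaker`, `with_retry`, `run_with_recovery`, `flaky_crm`, `backup_queue`) are hypothetical, and the delays are shortened so the example runs quickly.

```python
import time
from typing import Callable


class CircuitOpen(Exception):
    """Raised when a tool has failed too often and is skipped outright."""


class CircuitBreaker:
    def __init__(self, max_failures: int = 5) -> None:
        self.max_failures = max_failures
        self.failures = 0

    def call(self, fn: Callable[[], str]) -> str:
        if self.failures >= self.max_failures:
            raise CircuitOpen("too many consecutive failures")
        try:
            result = fn()
        except Exception:
            self.failures += 1
            raise
        self.failures = 0  # a success resets the failure count
        return result


def with_retry(fn: Callable[[], str], attempts: int = 3,
               base_delay: float = 0.01) -> str:
    """Retries fn, doubling the delay after each failed attempt."""
    for attempt in range(attempts):
        try:
            return fn()
        except CircuitOpen:
            raise  # no point retrying a tripped breaker
        except Exception:
            if attempt == attempts - 1:
                raise
            time.sleep(base_delay * 2 ** attempt)
    raise RuntimeError("attempts must be at least 1")


def run_with_recovery(primary: Callable[[], str], fallback: Callable[[], str],
                      breaker: CircuitBreaker,
                      escalate: Callable[[], str]) -> str:
    try:
        return with_retry(lambda: breaker.call(primary))
    except Exception:
        pass  # primary exhausted or circuit open: fall through to the fallback
    try:
        return with_retry(fallback)
    except Exception:
        return escalate()  # last resort: hand off to a human


# Hypothetical tools: the primary always times out, the fallback succeeds.
def flaky_crm() -> str:
    raise TimeoutError("CRM API timed out")


def backup_queue() -> str:
    return "queued for later sync"


breaker = CircuitBreaker(max_failures=5)
outcome = run_with_recovery(flaky_crm, backup_queue, breaker,
                            escalate=lambda: "escalated to human")
print(outcome, breaker.failures)
```

A vendor with real recovery behavior should be able to describe each of these stages for their own system: how many retries, what trips the breaker, what the fallback is, and when a human gets paged.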

The Business Decision

Chatbot:

├── Quick: 2 weeks to deploy

├── Cheap: ₹15K/month

├── Limited: 20% deflection

└── Ceiling: Cannot scale impact further

Autonomous Agent:

├── Invested: 7 weeks to deploy

├── Economical: ₹50K/month (infra only)

├── Transformative: 85% deflection

└── No ceiling: Scales with business

Year 1 comparison (100-seat BPO):

Chatbot: ₹1.8 Lakh spent → ₹30 Lakh saved (17x)

Autonomous Agent: ₹16 Lakh spent → ₹2.3 Cr saved (14x)

At SingularRarity Labs, we only build Level 4–5 systems. We don't wrap GPT in a widget and call it an agent. We architect production autonomous systems that take real actions, recover from real failures, and compound real savings.

Want to see a real execution trace from one of our deployments? Let's schedule a technical demo — not a slide deck.

SingularRarity Labs builds what others can't imagine — where singular ideas become rare realities.
