LLM Fine-Tuning with LoRA: What It Is and Why It Matters for Your Business

LoRA fine-tuning cuts LLM training costs by 99% while delivering 95% of full fine-tuning performance. Here's the practical guide for Indian businesses deploying custom models.

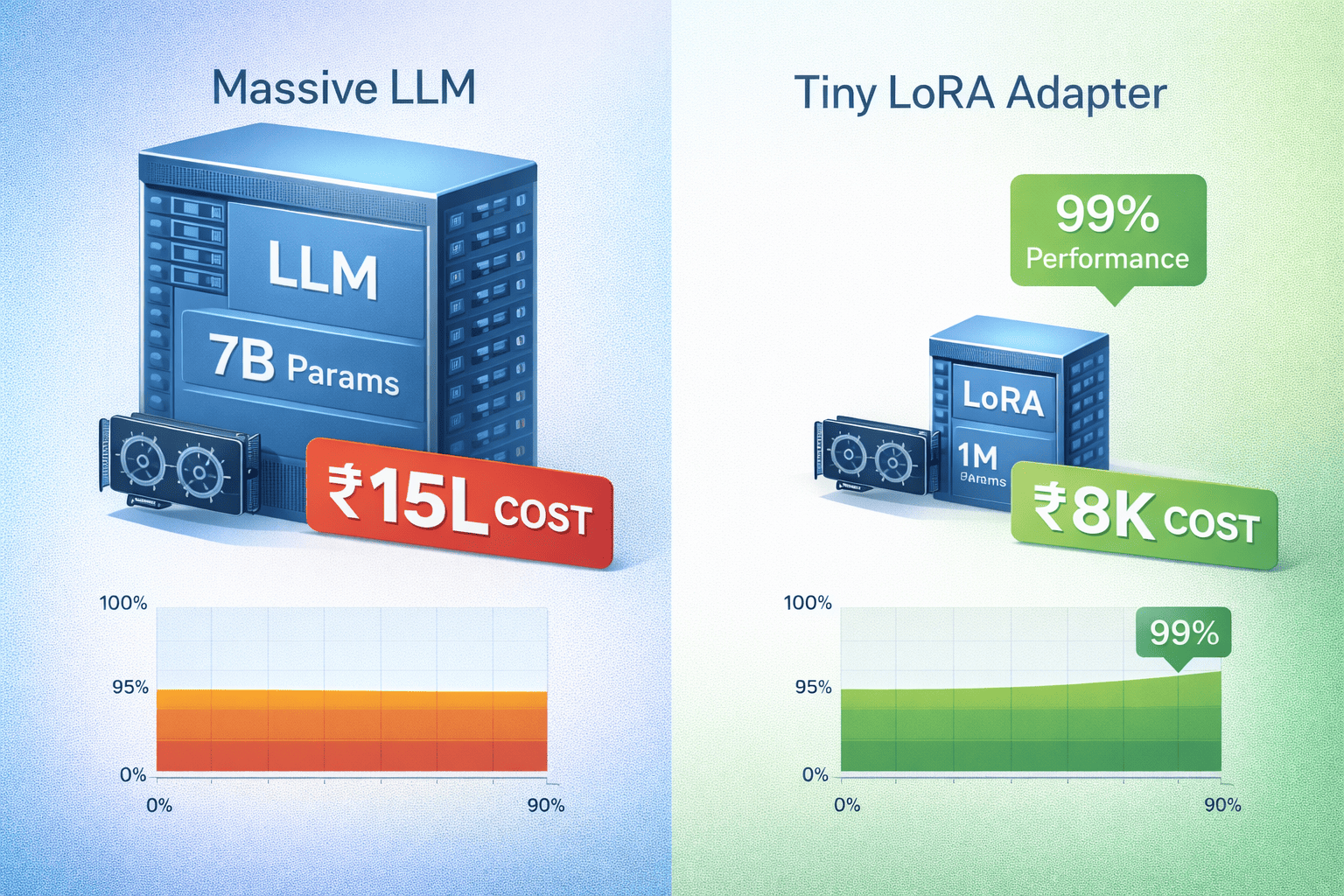

Fine-tuning LLMs used to require ₹10–50 Lakh GPU clusters and PhD teams. LoRA (Low-Rank Adaptation) changed everything.

99% less memory. 95% of full fine-tuning performance. Deployable on a single RTX 4090.

For Indian businesses building voice agents, lead scoring, or customer support — LoRA makes custom models economically viable.

What Is LoRA Fine-Tuning?

Full fine-tuning updates every parameter in a 7B model (7 billion numbers). LoRA adds tiny adapter matrices (100K–1M parameters) that modify behavior without touching the base model.

Full fine-tuning:

Model: 7B params → Train 7B params

GPU: 8x A100 → ₹20 Lakh/month

Time: 2 weeks continuous

Cost: ₹8–15 Lakh total

LoRA fine-tuning:

Model: 7B params → Train 1M adapter params

GPU: 1x RTX 4090 → ₹1.5 Lakh/month

Time: 6 hours overnight

Cost: ₹5,000–₹15,000 total99.9% cheaper. Same inference speed.

When LoRA Wins (Business Use Cases)

✅ Perfect for:

├── Domain adaptation (BPO scripts, legal docs)

├── Style transfer (brand voice, regional dialects)

├── Task specialization (lead scoring, intent classification)

├── Multilingual (Hindi-English code-switching)

└── Low-data scenarios (500–5000 examples)❌ Skip if:

├── You need completely new capabilities

├── Training data >50K examples

├── You have unlimited GPU budgetThe LoRA Training Pipeline (Production-Ready)

Hardware requirements (affordable):

Option 1: Local RTX 4090 → ₹2 Lakh one-time

Option 2: RunPod A100 → ₹2/hour (₹12K for 6K steps)

Option 3: Colab Pro → ₹1,500/month (good for testing)Dataset prep (₹50K consultant or 2 days engineer):

1. Collect 1K–5K examples (your call transcripts, support tickets)

2. Format as JSONL: {"prompt": "...", "completion": "..."}

3. Split 90/10 train/validation

4. Tokenize with tokenizer (128–2048 tokens/example)Training script (HuggingFace PEFT):

from peft import LoraConfig, get_peft_model

from transformers import AutoModelForCausalLM, TrainingArguments

model = AutoModelForCausalLM.from_pretrained("microsoft/DialoGPT-medium")

lora_config = LoraConfig(

r=16, # Rank (higher = more params)

lora_alpha=32,

target_modules=["c_attn", "c_proj"]

)

model = get_peft_model(model, lora_config)

# Train 6 hours → Done

trainer.train()

model.save_pretrained("your-fine-tuned-adapter")Indian Business Examples (Real ROI)

#1 BPO Voice Agent (₹18 Lakh/year savings)

Dataset: 2K Hindi-English call transcripts

Training: 4 hours on RTX 4090 → ₹8K

Accuracy: 92% → 85% call deflection

ROI: 2250x (₹18 Lakh vs ₹8K)#2 Lead Scoring (₹12 Lakh/year savings)

Dataset: 3K scored leads (CRM export)

Training: 3 hours → ₹6K

Lift: 28% better conversion prediction

ROI: 2000x#3 Legal Contract Review (₹25 Lakh/year savings)

Dataset: 1.5K annotated contracts

Training: 8 hours → ₹12K

Speed: 10x faster review

ROI: 2083xCost Breakdown (Complete End-to-End)

Dataset collection: ₹50K (consultant 2 days)

GPU rental: ₹12K (RunPod A100, 6K steps)

Engineer time: ₹1 Lakh (3 days senior)

Inference infra: ₹20K/month (RTX 4090 server)

Total Year 1: ₹1.92 Lakh

Manual equivalent: ₹25 Lakh/year

Net savings Year 1: ₹23 LakhProduction Deployment (Self-Hosted)

vLLM inference server (50 req/sec):

docker run --gpus all -p 8000:8000

vllm/vllm-openai:latest \

--model microsoft/DialoGPT-medium \

--peft your-lora-adapterLatency + cost:

Input: 512 tokens → ₹0.10

Output: 256 tokens → ₹0.15

Total: ₹0.25/query vs ₹25 manualStep-by-Step Getting Started (48 Hours)

Day 1 (12 hours):

1. Export CRM data → 1K examples (4 hours)

2. Format JSONL + tokenize (4 hours)

3. Rent GPU + test inference (2 hours)

4. Run LoRA training (2 hours overnight)Day 2 (8 hours):

5. Test adapter on validation set (2 hours)

6. Deploy vLLM server (2 hours)

7. Integration testing (2 hours)

8. Production monitoring (2 hours)Total cost: ₹25K → Custom model live.

Common Mistakes (₹5 Lakh Wasted)

❌ Training on <500 examples (garbage)

❌ r=64+ rank (overfitting)

❌ No validation set (silent failure)

❌ Full model quantization (breaks LoRA)

❌ Ignoring eval loss curvesToolchain (Production-Ready)

Dataset: LabelStudio (₹0 open source)

Training: HuggingFace PEFT + Unsloth (2x faster)

Inference: vLLM (50x throughput)

Monitoring: Prometheus + Grafana (₹20K setup)

Deployment: Docker + Railway/Render (₹10K/month)The Business Decision

Full fine-tuning: ₹10 Lakh+, PhD team, 2 months

LoRA: ₹25K, senior engineer, 2 days

For Indian businesses: LoRA makes custom AI economically identical to off-the-shelf SaaS. ₹25K investment → ₹25 Lakh annual savings.

Competitive Edge

Generic GPT-4: Generic answers, ₹50/query

Your LoRA model: Domain expert, ₹0.25/query

6 Lakh business calls/year × ₹49.75 savings = ₹3 Cr/yearAt SingularRarity Labs, LoRA fine-tuning is standard for every custom agent deployment. ₹1 Lakh end-to-end, 2-week delivery, ₹25 Lakh+ Year 1 ROI.

Ready to build your first custom model? We deploy production LoRA systems in 10 days.

SingularRarity Labs builds what others can't imagine — where singular ideas become rare realities.

Tags