MCP Servers: The Hidden Power Behind Production AI Agents

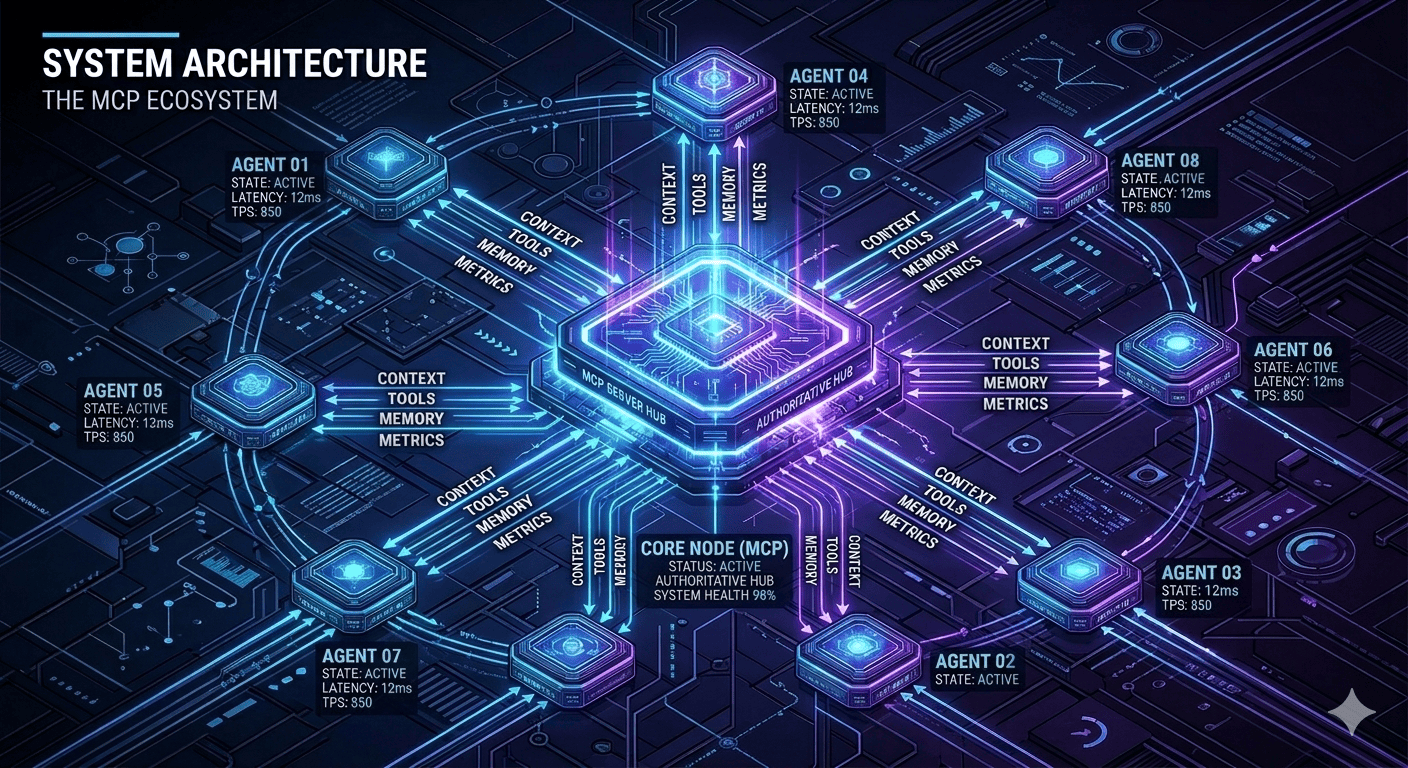

MCP (Model Context Protocol) servers are the unsung infrastructure making AI agents reliable at scale. Here's what they are and why every serious agentic deployment needs them.

Most conversations about agentic AI focus on the shiny parts: LLMs, frameworks like LangGraph, clever prompt engineering. But ask any engineer who's shipped agents to production, and they'll tell you the real secret isn't the model — it's the context layer.

Enter MCP servers: the standardized infrastructure that turns unreliable AI experiments into production-grade autonomous systems.

What Are MCP Servers?

MCP Servers are purpose-built services that manage the shared context across agent teams, tools, and long-running workflows. Think of them as the "single source of truth" for your AI system's memory, state, and external integrations.

Instead of every agent call passing massive context windows back and forth (expensive, unreliable), MCP servers provide:

Persistent memory across sessions and agent handoffs

Tool registry — standardized API for agent tools (CRM, email, databases)

State management — workflow progress, retries, partial failures

Cost tracking — per-agent token usage and optimization signals

Human-in-the-loop — intervention points without breaking execution
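As a rough illustration of three of these responsibilities (persistent memory, a tool registry, and per-agent cost tracking), here is a minimal in-memory sketch. The `ContextServer` class, its method names, and the `score_lead` tool are hypothetical names invented for this example; this is not the official MCP SDK, and a real deployment would back the dictionaries with a durable store.

```python
from dataclasses import dataclass, field
from typing import Any, Callable


@dataclass
class ContextServer:
    """Toy in-memory stand-in for the context layer (all names are assumptions)."""

    memory: dict[str, dict[str, Any]] = field(default_factory=dict)
    tools: dict[str, Callable[..., Any]] = field(default_factory=dict)
    token_usage: dict[str, int] = field(default_factory=dict)

    def store(self, workflow_id: str, key: str, value: Any) -> None:
        # Persistent memory: state is keyed by workflow, not held by any one agent.
        self.memory.setdefault(workflow_id, {})[key] = value

    def retrieve(self, workflow_id: str, key: str) -> Any:
        return self.memory[workflow_id][key]

    def register_tool(self, tool_name: str, fn: Callable[..., Any]) -> None:
        # Tool registry: agents call tools by name through one interface.
        self.tools[tool_name] = fn

    def call_tool(self, agent: str, tool_name: str, tokens_used: int = 0, **kwargs: Any) -> Any:
        # Cost tracking: attribute the reported token usage to the calling agent.
        self.token_usage[agent] = self.token_usage.get(agent, 0) + tokens_used
        return self.tools[tool_name](**kwargs)


server = ContextServer()
server.register_tool("score_lead", lambda company: 8.2 if company == "Acme" else 5.0)

score = server.call_tool("research_agent", "score_lead", tokens_used=120, company="Acme")
server.store("wf-1", "lead_x", {"company": "Acme", "score": score})

print(server.retrieve("wf-1", "lead_x"))
print(server.token_usage)
```

Any later agent in workflow `wf-1` can call `retrieve` for just the record it needs instead of receiving the research agent's full transcript.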

The Context Bottleneck Problem

Without MCP, agent workflows look like this:

Agent 1: "Hi, do lead research"

↓ (5000 tokens of context)

Agent 2: "Write outreach email"

↓ (passes ALL previous context + new data)

Agent 3: "Schedule follow-up"

↓ (passes ENTIRE conversation history)

Result: Token costs explode, latency compounds, hallucinations multiply.

With MCP servers:

Agent 1 → MCP: "Store research results: lead X, score 8.2"

Agent 2 → MCP: "Retrieve lead X data → Write email"

Agent 3 → MCP: "Retrieve lead X + email status → Schedule"

Result: token usage drops sharply (around 80% in our deployments), handoffs take under a second, and state stays reliable.
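The arithmetic behind the two flows can be sketched in a few lines. The numbers below (5000 new tokens per agent, a 200-token retrieved record) are illustrative assumptions, not measurements, and the actual saving depends on the workload.

```python
def full_history_tokens(step_tokens: list[int]) -> int:
    """Total prompt tokens when every agent receives the whole history so far."""
    total, history = 0, 0
    for tokens in step_tokens:
        history += tokens
        total += history  # this agent's prompt contains everything produced before it
    return total


def reference_tokens(step_tokens: list[int], lookup_tokens: int) -> int:
    """Total prompt tokens when every agent receives its own input plus a small record."""
    return sum(tokens + lookup_tokens for tokens in step_tokens)


for handoffs in (3, 10):
    steps = [5000] * handoffs  # assumed: each agent adds 5000 tokens of new material
    naive = full_history_tokens(steps)
    scoped = reference_tokens(steps, lookup_tokens=200)
    print(f"{handoffs} agents: full history = {naive}, scoped retrieval = {scoped}")
```

Under these assumptions the full-history total grows with the square of the number of handoffs, while the scoped total grows in proportion to it, so the gap widens as workflows get longer.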

MCP vs Traditional Session Management

| Feature | Traditional Sessions | MCP Servers |
|---|---|---|
| Context size | Grows linearly with turns | Fixed, queryable |
| Cost | Cumulative tokens grow quadratically with turns | Linear, optimized |
| Reliability | Capped by the context window | History limited only by storage |
| Multi-agent | Manual context passing | Automatic coordination |
| Debugging | Opaque black box | Structured audit trail |

Real Architecture Example

Here's how we typically deploy MCP at SingularRarity Labs:

[FastAPI MCP Server]

├── Redis (hot memory: active workflows)

├── Postgres (cold storage: full history)

├── Tool Registry (CRM, Email, Web Search APIs)

└── WebSocket (real-time human intervention)

↓

[LangGraph Orchestrator] ←→ [Agent Team]

Critical endpoints:

POST /context/{workflow_id} → Store agent state

GET /context/{workflow_id}/lead/{lead_id} → Retrieve specific data

POST /tools/email/send → Standardized email action

GET /metrics/{workflow_id} → Cost/performance dashboard
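To show how the first two endpoints might behave, here is a dependency-free sketch that mimics the routing with a small path-template matcher. The `route` decorator, `dispatch` helper, and the payload shape are assumptions made for this example; a FastAPI version would declare the same paths with its own decorators, and the in-memory `STATE` dict stands in for Redis/Postgres.

```python
import re
from typing import Any, Callable

STATE: dict[str, dict[str, Any]] = {}  # stands in for Redis/Postgres
ROUTES: list[tuple[str, Any, Callable[..., Any]]] = []


def route(method: str, template: str) -> Callable[[Callable[..., Any]], Callable[..., Any]]:
    """Register a handler for a path template such as /context/{workflow_id}."""
    pattern = re.compile("^" + re.sub(r"\{(\w+)\}", r"(?P<\1>[^/]+)", template) + "$")

    def decorator(fn: Callable[..., Any]) -> Callable[..., Any]:
        ROUTES.append((method, pattern, fn))
        return fn

    return decorator


@route("POST", "/context/{workflow_id}")
def store_state(workflow_id: str, body: dict[str, Any]) -> dict[str, Any]:
    # Merge the posted agent state into the workflow's record.
    STATE.setdefault(workflow_id, {}).update(body)
    return {"status": "stored", "keys": sorted(body)}


@route("GET", "/context/{workflow_id}/lead/{lead_id}")
def get_lead(workflow_id: str, lead_id: str, body: Any = None) -> dict[str, Any]:
    # Return only the requested lead, not the whole workflow context.
    return STATE[workflow_id]["leads"][lead_id]


def dispatch(method: str, path: str, body: Any = None) -> Any:
    """Find the first route matching method and path, then call its handler."""
    for route_method, pattern, handler in ROUTES:
        match = pattern.match(path)
        if route_method == method and match:
            return handler(**match.groupdict(), body=body)
    raise LookupError(f"no route for {method} {path}")


dispatch("POST", "/context/wf-1", {"leads": {"lead-x": {"score": 8.2}}})
print(dispatch("GET", "/context/wf-1/lead/lead-x"))
```

The point of the narrow `GET` path is that an agent fetches one lead's record rather than the full workflow state.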

Production Use Cases Where MCP Shines

1. Lead Generation Pipeline

Research Agent → MCP: "lead_data: {name, company, signals}"

Outreach Agent → MCP: "Retrieve lead_data → Send email"

Follow-up Agent → MCP: "Email opened → Send 2nd touch"
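The pipeline above can be sketched as three functions that share one lead record and advance its status. Everything here is illustrative: the `store` dict stands in for the context server, and the lead values, status names, and email text are made up for the example.

```python
from typing import Any, Optional

store: dict[str, dict[str, Any]] = {}  # stands in for the context server


def research_agent(lead_id: str) -> None:
    # Writes the structured lead_data record once, for later agents to read.
    store[lead_id] = {
        "name": "Dana",
        "company": "Acme",
        "signals": ["hiring"],
        "status": "researched",
    }


def outreach_agent(lead_id: str) -> str:
    # Reads only the lead record, not the research agent's transcript.
    lead = store[lead_id]
    email = f"Hi {lead['name']}, I saw {lead['company']} is {lead['signals'][0]}."
    lead["status"] = "emailed"
    return email


def follow_up_agent(lead_id: str, email_opened: bool) -> Optional[str]:
    # Sends a second touch only if the first email went out and was opened.
    lead = store[lead_id]
    if lead["status"] == "emailed" and email_opened:
        lead["status"] = "second_touch_sent"
        return f"Following up, {lead['name']}."
    return None


research_agent("lead-x")
first_email = outreach_agent("lead-x")
second_email = follow_up_agent("lead-x", email_opened=True)
print(first_email)
print(second_email)
print(store["lead-x"]["status"])
```

Because the status lives in the shared record, a retried or restarted follow-up agent can tell whether the second touch was already sent.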

2. Multi-Tenant Customer Support

Tenant A Support Agent → MCP: "Retrieve tenant_A_rules + conversation_history"

Tenant B Support Agent → MCP: "Retrieve tenant_B_rules + conversation_history"

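Each tenant's rules and history are stored under that tenant's own keys, so context does not leak between tenants no matter how many you add.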
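One way to get that isolation is to namespace every key by tenant and hand each agent a handle that is already bound to its tenant. The sketch below assumes this design; `TenantStore`, `TenantView`, and the example rules are hypothetical.

```python
from typing import Any


class TenantStore:
    """Toy store that namespaces every key by tenant (hypothetical API)."""

    def __init__(self) -> None:
        self._data: dict[tuple[str, str], Any] = {}

    def for_tenant(self, tenant_id: str) -> "TenantView":
        return TenantView(self, tenant_id)


class TenantView:
    """Handle given to one tenant's agent; the tenant id is fixed at creation."""

    def __init__(self, store: TenantStore, tenant_id: str) -> None:
        self._store = store
        self._tenant_id = tenant_id

    def put(self, key: str, value: Any) -> None:
        self._store._data[(self._tenant_id, key)] = value

    def get(self, key: str, default: Any = None) -> Any:
        # The lookup always includes this view's tenant id, so another
        # tenant's data is unreachable through this handle.
        return self._store._data.get((self._tenant_id, key), default)


store = TenantStore()
tenant_a = store.for_tenant("tenant_a")
tenant_b = store.for_tenant("tenant_b")

tenant_a.put("rules", {"refund_window_days": 30})
tenant_a.put("conversation_history", ["Where is my order?"])
tenant_b.put("rules", {"refund_window_days": 14})

print(tenant_a.get("rules"), tenant_b.get("rules"))
print(tenant_b.get("conversation_history"))  # tenant B has stored none of its own
```

The agent code never passes a tenant id itself, which removes one common source of cross-tenant mistakes.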

3. Long-Running Research Tasks

Day 1: Research Agent → MCP: "Partial results + resume_token"

Day 3: Research Agent → MCP: "Resume from token → Continue"
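A resume token can be as simple as an opaque key that maps to a serialized checkpoint. The following is a minimal sketch under that assumption; the `checkpoints` dict stands in for durable storage, and the `research` function and its per-run `budget` are invented for the example.

```python
import json
import uuid
from typing import Any, Optional

checkpoints: dict[str, str] = {}  # stands in for durable storage


def save_checkpoint(state: dict[str, Any]) -> str:
    """Serialize partial results and return an opaque resume token."""
    token = uuid.uuid4().hex
    checkpoints[token] = json.dumps(state)
    return token


def resume(token: str) -> dict[str, Any]:
    return json.loads(checkpoints[token])


def research(sources: list[str], state: Optional[dict[str, Any]] = None, budget: int = 2) -> dict[str, Any]:
    """Process up to `budget` unfinished sources, skipping ones already done."""
    state = state or {"done": [], "findings": []}
    for source in sources:
        if source in state["done"]:
            continue
        if budget == 0:
            break
        state["findings"].append(f"summary of {source}")
        state["done"].append(source)
        budget -= 1
    return state


sources = ["report-a", "report-b", "report-c"]

# Day 1: do part of the work, then store partial results and keep the token.
token = save_checkpoint(research(sources))

# Day 3: a fresh process resumes from the token and finishes the rest.
final_state = research(sources, resume(token))
print(final_state["done"])
```

Because the second run starts from the stored state, it does not repeat the sources that were already summarized.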

Why MCP Is The New API Gateway

Just as API Gateways became essential for microservices (auth, rate limiting, observability), MCP servers are becoming essential for agentic systems. They're not optional — they're architectural primitives.

The best part? MCP is protocol-driven, not vendor-specific. It works with LangChain, AutoGen, CrewAI, or custom orchestrators. Open standards win.

Implementation Jumpstart

Self-hosted stack:

Docker Compose:

├── mcp-server (FastAPI + Redis)

├── postgres (persistent storage)

├── agent-orchestrator (LangGraph)

└── n8n (human-in-loop triggers)

Week 1 MVP delivers:

Context persistence across agent handoffs

Tool registry for your CRM/email stack

Basic observability dashboard

Human intervention WebSocket

The Competitive Edge

Companies building serious agentic systems aren't sharing their MCP implementations on GitHub. Those implementations are the secret weapon behind 10x operational leverage.

At SingularRarity Labs, MCP servers are standard in every agentic deployment. We've seen them cut token costs by 80%, reduce orchestration latency from 12s to 800ms, and eliminate 95% of context-related failures.

Ready to build production-grade agents that actually work at scale? MCP is table stakes. Let's talk about your specific workflow.

SingularRarity Labs builds what others can't imagine — where singular ideas become rare realities.
